Often late and costs a pretty penny: do impact evaluations meet the opportunity window?

The number of impact evaluations of development programmes has increased over the past decade. Yet people often say that ‘they are too expensive’, and many evaluations are considerably delayed and miss the window of opportunity to influence policy.

How much does an impact evaluation cost?

Are they on time, generally? Can they be used to inform policy?

The most pragmatic answer is ‘it depends’!

As we explore in a recent IEU working paper, the answers depend on where impact evaluations are conducted and what they are measuring. In the paper, we examine the first cohort of impact evaluation grants made by the International Initiative for Impact Evaluation (3ie). Forty-five grants were made to organizations to undertake impact evaluations in real-world settings, with all the political, data, implementation and resource constraints experienced by development agencies. Importantly, unlike laboratory experiments, where researchers control both the implementation of the project and the impact evaluation, researchers in real-world impact evaluations have little control over implementation and other contextual factors. The experience from implementing these grants, we feel, is far more relevant to development (and climate!) organizations that are considering impact evaluations (see, for example, the IEU’s LORTA programme).

Costs of conducting real-world impact evaluations

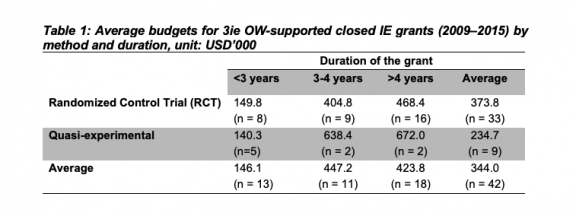

The cost of conducting an impact evaluation depends on the programme being evaluated, the study design, the sample size, the desired effect size, other outcomes of interest and the local context, among other factors. On average, 3ie-funded impact evaluations cost about USD 334,000 (see Table 1).

Disaggregating grants by design and length of the study shows that the ex-post studies that used quasi-experimental designs cost the least. This is expected, as some of these designs do not collect baseline data, or indeed any survey data at all, and some do not undertake qualitative work (note that low cost does not necessarily equate to a low level of robustness or rigour). Multi-year studies with multiple rounds of data collection using mixed methods cost USD 395k over the lifetime of the evaluation.

When we disaggregated the data by region, we found that it is cheaper to carry out impact evaluations in Africa and Latin America, and more expensive in South Asia.

Again, this is not surprising: one of the key cost drivers in impact evaluations is the cost of surveys. The average survey cost for a typical 3ie grant was USD 133k, which represented 40% of the average budget for an impact evaluation. The average increases to USD 176k for multi-year, multi-round survey studies. The other major cost driver in IEs is personnel: the cost of the high-quality research staff required to conduct them. Personnel costs cover the time and effort that study teams put into designing real-world evaluations and surveys, analysing data, writing the report and disseminating findings. On average, 3ie grantees spent USD 109k on personnel costs. Average travel expenses were USD 19,822.
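To make the relative weight of these cost drivers concrete, here is a minimal sketch that turns the averages quoted above into budget shares. It assumes, for illustration only, that the survey, personnel and travel averages are all computed over the same set of grants as the USD 334,000 overall average; the residual "other" share is therefore indicative rather than a figure reported in the paper.

```python
# Average figures quoted in the text (USD), rounded as reported.
AVERAGE_TOTAL = 334_000
AVERAGE_COSTS = {
    "surveys": 133_000,
    "personnel": 109_000,
    "travel": 19_822,
}


def cost_shares(costs, total):
    """Return each cost component as a fraction of the total budget."""
    return {name: amount / total for name, amount in costs.items()}


shares = cost_shares(AVERAGE_COSTS, AVERAGE_TOTAL)

# Assumption: whatever is not surveys, personnel or travel is lumped
# into a residual category. This is not a number from the paper.
shares["other (residual)"] = 1 - sum(shares.values())

for name, share in shares.items():
    print(f"{name}: {share:.1%}")
```

On these rounded averages, surveys and personnel together account for a little over 70% of a typical budget, which is why they dominate any discussion of cost.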

Timeliness of real-world impact evaluations

We defined ‘delay’ in the paper as not being able to submit the revised final impact evaluation report on the originally agreed date. On average, 28 per cent of the IE grants were delayed for more than a year and the delay was higher for studies that were initially planned for short durations. We identified the following causes for delays:

- Initial delays because of lack of engagement: While all grants selected for 3ie financing had to include letters of agreement from the government or implementing agency, most research teams from the early 3ie grant windows started engaging in earnest with the implementing agency only after having been notified of the 3ie award. This meant that many grants had to be changed because the implementing agency had not internalized the full implications of undertaking an impact evaluation for the implementation of their project. (3ie has now built a 3-6 month formative research grant period into its grant timelines.)

- Process and political delays: Several impact evaluations were delayed due to programme implementation delays and political reasons beyond the control of the research team.

- Low take-up and the risk of underpowered studies: In several studies, we witnessed a lower take-up of the programme than had originally been anticipated by research teams. Lower-than-expected uptake meant that sample sizes were too small for robust measures of impact. The study team had to think of creative ways to increase uptake or alter the intervention altogether, all of which takes time.

- Implementation obstacles: In several studies, grantees encountered implementation obstacles such as messaging software or bank transfers not working. These are the kind of issues that could have been avoided had there been pilot testing beforehand. Most grant windows (including 3ie and IEU’s LORTA) build in a first formative phase to minimize the risk of such implementation and uptake obstacles.

- Surveys and data analysis: Most impact evaluation studies collect primary data for their results. In many cases, study teams underestimate the amount of time required to plan, train for and roll out surveys, and also underestimate the time needed to clean data, do double data entry and analyse data.

- Quality of study reports: Despite several checks and balances and report guidance shared with the teams up front, poorly written study reports often get submitted. Most submitted first drafts lacked one or more of the following details: balance tables; an adequate theory of change; description of the intervention; investigation of the literature; description of attrition and spillover effects; sensitivity tests to different specifications; discussion of results; and, last but not least, actionable policy recommendations. In such cases, these studies are sent back and detailed revisions are requested.
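The link between low take-up and underpowered studies noted above can be illustrated with a standard back-of-the-envelope power calculation. This is a sketch under stated assumptions, not the method used in the 3ie studies: it uses the normal approximation for a two-arm comparison of means, assumes individual-level randomization with no clustering and no take-up in the control group, and the effect size, significance level and power are illustrative values chosen here. The function name `n_per_arm` is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_arm(effect_sd, take_up=1.0, alpha=0.05, power=0.80):
    """Approximate sample size per arm for a two-arm comparison of means.

    effect_sd: effect on those who actually take up the programme,
               in standard-deviation units (illustrative assumption).
    take_up:   share of the treatment group that takes up the programme.
    """
    if not 0 < take_up <= 1:
        raise ValueError("take_up must be in (0, 1]")
    z = NormalDist()
    z_total = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    # With partial take-up, the average effect across everyone offered
    # the programme shrinks to effect_sd * take_up.
    diluted_effect = effect_sd * take_up
    return ceil(2 * (z_total / diluted_effect) ** 2)


full = n_per_arm(0.2, take_up=1.0)
half = n_per_arm(0.2, take_up=0.5)
print(f"Full take-up: {full} per arm; 50% take-up: {half} per arm")
```

Because the required sample grows with the inverse square of the take-up rate, halving take-up roughly quadruples the sample needed to detect the same underlying effect. This is why teams facing low uptake had to find ways to raise it or rethink the intervention.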

Conclusions and implications for future IEs

Real-world impact evaluations face many obstacles. In many cases, these obstacles are beyond the control of the study team, the implementation team or the funder. There are some risks, however, that can be controlled and mitigated up front:

- Prior formative research: Good formative research can help reduce the risks posed by critical bottlenecks and by unexpected challenges in hypothesized theories of change and technologies, and can mitigate problems of low uptake. Formative research also has the (until recently unintended) consequence that it gets the research team to interact far more with project implementation teams during the early stages of planning.

- Closer monitoring of projects and impact evaluations: Close monitoring of projects and of the implementation of impact evaluations can reduce delays. In most cases, it helps if IE teams have someone located in the project (while not interfering too much).

- Building in flexibility: Building flexibility into timelines can help organizations, and funders in particular, manage their own expectations and ensure that teams are protected from reputational concerns. Funders should specifically build flexibility into timelines while also accommodating changes in designs, pre-analysis plans and impact evaluation questions when necessary. Given the long timelines for most impact evaluations, flexibility in timelines will also help teams manage the turnover that inevitably occurs within teams.

- Manage cost overruns: Building in a strong financial due-diligence process can help manage cost overruns. On average, 3ie grants did not face any overrun; instead, they showed a minor underspend. This was due to the rigorous budget review process, in which personnel, surveys, travel and other costs are clearly benchmarked against past studies in a comparable context.


Disclaimer: The views expressed in blogs are the authors' own and do not necessarily reflect the views of the Independent Evaluation Unit of the Green Climate Fund.