Developing evaluation policies - IEU’s seminar at the United Nations Evaluation Group Annual Week

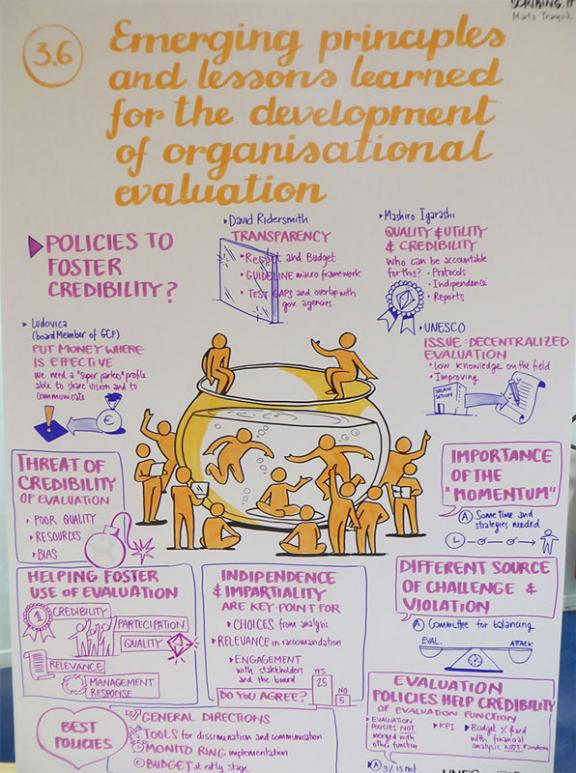

The Independent Evaluation Unit of the GCF (IEU-GCF) convened a seminar on 'Emerging principles and lessons learned for the development of organized evaluation policies' at the United Nations Evaluation Group (UNEG) Week, held in Rome from 7 to 11 May.

Chaired and moderated by IEU Head Dr. Jyotsna Puri (Jo), the panel included David Rider Smith, Team Leader of the Joint Inspection Unit of the UN System; Masahiro Igarashi, Director of the FAO Office of Evaluation; Ludovica Soderini, GCF Board Member; Amir Piric, Chief of UNESCO’s Evaluation Section; and Susanne Frueh, Director of UNESCO’s Internal Oversight Service. Each panelist spoke about three aspects of evaluation policy design:

- Credibility and measurement within evaluations;

- Usefulness of evaluations; and

- Impartiality and independence of evaluations.

The discussion was held under the Chatham House Rule, which prohibits attributing comments to individual speakers. Participants were, however, encouraged to share their main takeaways after the event. This article reprises some elements of the discussion. A video from the event also presents the views of several participants on the role of evaluation in achieving the Sustainable Development Goals.

One question put to the panel was: to what extent have evaluation policies helped foster credibility and measure results? The ensuing discussion noted that UNDP had no evaluation policy until 2006. The policy adopted that year examined and established criteria for credibility, independence, quality and timeliness. An evaluation policy also helps answer questions such as who is accountable for evaluation reports, who sets quality guidelines, who ensures an evaluation’s quality and who ensures that evaluations are evidence-based.

Another question asked the panel to describe what had helped foster the use of evaluations. One delegate said that, to be useful, evaluations need to be independent and well discussed with management and stakeholders. Delegates also agreed that the data used in evaluations should be evidence-based to enhance credibility. Panelists agreed that evaluations should not merely criticize but should identify problems and suggest solutions. One participant said that the participation of key stakeholders is very important and suggested a reference group as one way to achieve it. This requires a large investment, but it can ensure that evaluations are useful and relevant. Communication with all stakeholders is also critical. For another participant, it was vital to explain the use of evaluations to all the stakeholders involved.

Other issues raised during the discussion included how evaluation policies reflect an organization’s culture; how to ensure the quality of evaluations, including decentralized ones; the need to strengthen monitoring and evaluation; and the importance of building capacity for good data collection within projects.

All of this information is important grist for the IEU mill. Sharing ideas, learning how other specialists approach issues, and hearing different perceptions of what constitutes credibility, usefulness and impartiality in an evaluation is extremely valuable to the IEU as it begins to roll out a draft evaluation policy for the GCF. With this knowledge, the IEU will be able to produce a more informed, more inclusive policy that will better guide future evaluations of the GCF’s climate actions.

By Greg Clough, Communications and Uptake Consultant